TL; DR: Autonomous AI Agents — Food for Agile Thought #533

Welcome to the 533rd edition of the Food for Agile Thought newsletter, shared with 35,708 peers. This week, Ezra Klein interviews Anthropic’s Jack Clark on autonomous AI agents that act, not just chat. They warn about specs, oversight, and security as senior judgment grows in value. Teresa Torres and Petra Wille draw a hard line between product outcomes and engineering quality, and Jing Hu shows domain insiders can beat coders at AI hackathons. Also, Andreas Horn, Daniel Nest, and Pavel Samsonov argue for durable instructions, a living context, and real customer signals before speed.

Next, John Cutler reminds us that shipping creates potential, not outcomes, so treat each release as a hypothesis and trace causal chains from near-term effects to long-term results. Paweł Huryn describes Claude Cowork, a desktop agent that plans work, runs parallel sub-agents, and writes real files with plugins, skills, and MCP, while Benedict Evans questions OpenAI’s moat, and Elena Verna urges an AI-native weekly build cadence. Deb Liu ties it together with collaboration habits that widen options.

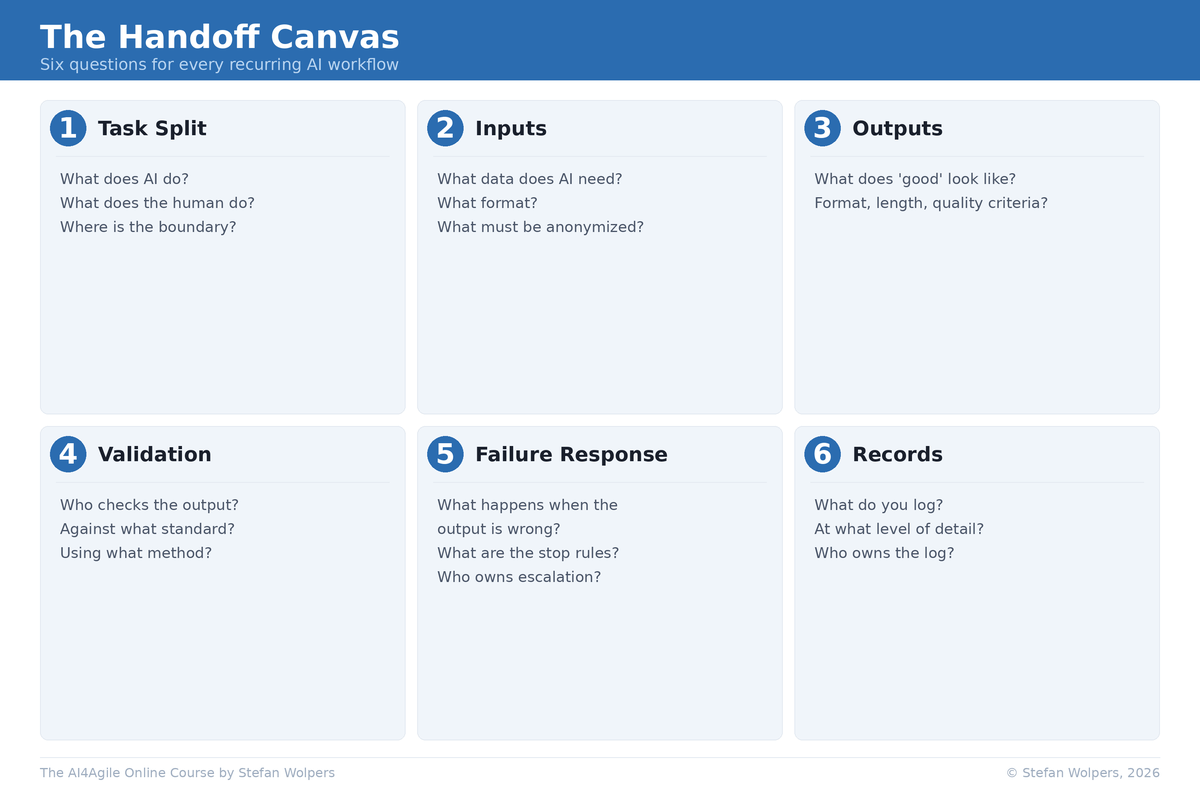

Then, Dror Poleg warns of a jobless boom where GDP rises while hiring stalls, pushing cities toward flexible zoning, conversions, and fiscal tools that spread gains. Zapier frames AI transformation as leadership, culture, tools, and governance that multiply into impact, while Nicole Koenigstein shows multi-agent handoffs compound errors unless you add gates and schemas. Also, Andi Roberts urges friction-based team charters with review cadences. Finally, Anthropic links AI fluency to iteration and tougher evaluation.